The cross entropy is rising, the selected $a$ becomes so large, however the accuracy continues to rise (and then stops to rise). Here is an interesting thing: The plot looks like my initial problem. For this $b$, we can show the evolution of cross entropy and accuracy when $a$ varies (on the same graph). The $b$ minimizing the cross entropy is 0.42. Here, I plot only the most interesting graph. I selected a seed to have a large effect, but many seeds lead to a related behavior. I took the data as a sample under the logit model with a slope $a=0.3$ and an intercept $b=0.5$.

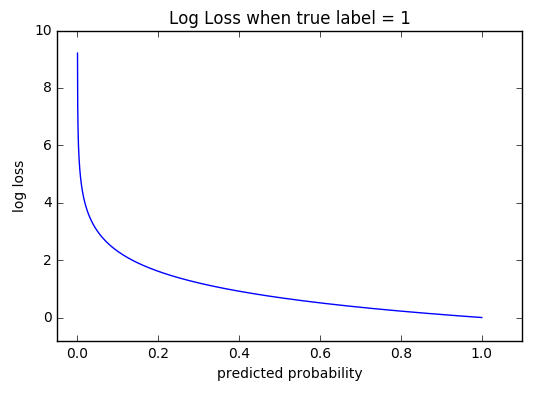

For this $a$, we can show the evolution of cross entropy and accuracy when $b$ varies (on the same graph). The $a$ minimizing the cross entropy is 1.27. In that case, the third point is far away from the cut, so has a large cross entropy. In the second case, the cross entropy is large too. Indeed, the fourth point is far away from the cut, so has a large cross entropy. In the first case, the cross entropy is large. Well, you can also select x=0.95 to cut the sets. This can be shown directly, by selecting the cut x=-0.1. The cut between 0 and 1 is done at x=0.52.įor the 5 values, I obtain respectively a cross entropy of: 0.14, 0.30, 1.07, 0.97, 0.43.Īfter maximizing the accuracy on a grid, I obtain many different parameters leading to 0.8. I have 5 points, and for example input -1 has lead to output 0.Īfter minimizing the cross entropy, I obtain an accuracy of 0.6. Illustration 1 This one is to show that the parameter where the cross entropy is minimum is not the parameter where the accuracy is maximum, and to understand why. However, we may expect some relationship between cross entropy and accuracy.

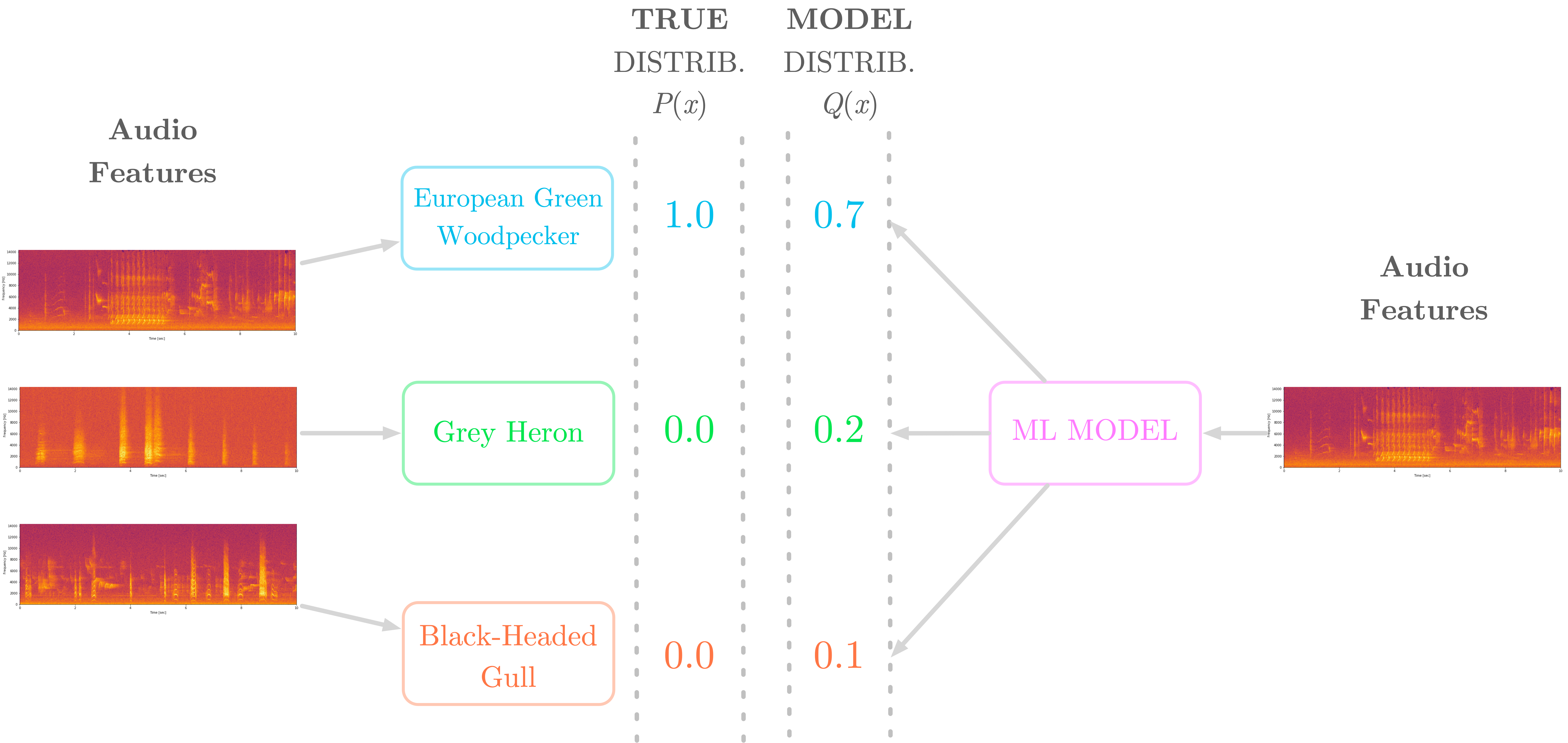

In general, the parameter where the cross entropy is minimum is not the parameter where the accuracy is maximum. In the following, I just illustrate this relationship in 3 special cases. To understand relationships between cross entropy and accuracy, I have dug into a simpler model, the logistic regression (with one input and one output). Red is for the training set and blue is for the test set.īy showing the accuracy, I had the surprise to get a better accuracy for epoch 1000 compared to epoch 50, even for the test set! Here the result of the cross entropy as a function of epoch. I have trained my neural network binary classifier with a cross entropy loss.
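The gap between the two criteria can be checked numerically. Below is a minimal sketch, not the code behind the plots in this post: it draws a sample under the logit model with slope 0.3 and intercept 0.5, then scans a grid of $(a, b)$ to find the cross entropy minimizer and an accuracy maximizer. The sample size, the seed, the standard normal inputs, the grid range, and the function names are all my own assumptions, so the numbers it prints will not match the ones quoted in the text.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def cross_entropy(a, b, x, y, eps=1e-12):
    """Mean cross entropy of the logistic model p = sigmoid(a*x + b)."""
    p = np.clip(sigmoid(a * x + b), eps, 1.0 - eps)
    return float(-np.mean(y * np.log(p) + (1 - y) * np.log(1 - p)))

def accuracy(a, b, x, y):
    """Share of points on the correct side of the cut a*x + b = 0."""
    pred = (a * x + b > 0).astype(int)
    return float(np.mean(pred == y))

# Assumptions: seed, sample size and input distribution are illustrative only.
rng = np.random.default_rng(0)
n = 30
x = rng.normal(size=n)
y = (rng.random(n) < sigmoid(0.3 * x + 0.5)).astype(int)

# Assumed grid over slope a and intercept b.
a_grid = np.linspace(-5.0, 5.0, 101)
b_grid = np.linspace(-5.0, 5.0, 101)
ce = np.array([[cross_entropy(a, b, x, y) for b in b_grid] for a in a_grid])
acc = np.array([[accuracy(a, b, x, y) for b in b_grid] for a in a_grid])

# Grid point minimizing the cross entropy, and one maximizing the accuracy
# (the accuracy is piecewise constant, so its maximizer is rarely unique).
i_ce = np.unravel_index(np.argmin(ce), ce.shape)
i_acc = np.unravel_index(np.argmax(acc), acc.shape)

print("min cross entropy at a=%.2f, b=%.2f: CE=%.3f, accuracy=%.3f"
      % (a_grid[i_ce[0]], b_grid[i_ce[1]], ce[i_ce], acc[i_ce]))
print("max accuracy at      a=%.2f, b=%.2f: CE=%.3f, accuracy=%.3f"
      % (a_grid[i_acc[0]], b_grid[i_acc[1]], ce[i_acc], acc[i_acc]))
```

Comparing the two printed lines shows how much accuracy is given up at the cross entropy minimum and how much cross entropy is paid at the accuracy maximum. Because the accuracy only depends on which side of the cut each point falls, whole regions of the grid share the same accuracy while their cross entropy differs a lot.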
